Strongyloides Hypothesis: Summary Conclusions and What's Next

Now that the long-form article I wrote on the Strongyloides hypothesis is complete, it’s a good time to summarize my current stance on the hypothesis, and what may come next. I think the best way to do that is by following its timeline.

Original Version: Napkin Calculation

The Strongyloides hypothesis (briefly: that Strongyloides worm infections may be responsible—at least in part—for the positive results in ivermectin studies) entered the picture with this Tweet by Dr. Avi Bitterman:

While this might not be the first time this hypothesis was articulated, it is—to my knowledge—the first time it was substantiated with some reasonable statistical analysis to back it up.

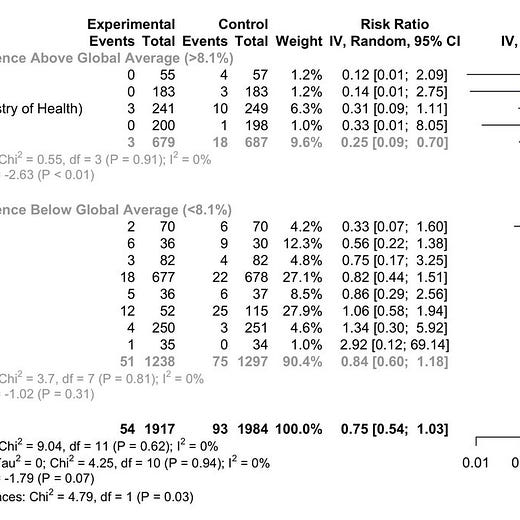

The problem is that the analysis itself reports a p-value of 0.27 in the critical “test for subgroup differences,” meaning that the association is fairly weak and falls short of the threshold (p<0.05) traditionally considered to warrant further investigation.

This is, however, the version that was initially included in Scott Alexander’s article on ivermectin.
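For readers unfamiliar with the “test for subgroup differences,” here is a minimal sketch of the standard inverse-variance version. It pools each subgroup separately, then asks whether the pooled estimates differ by more than their standard errors would allow. It assumes a fixed-effect model and exactly two subgroups (so the chi-square test has one degree of freedom). Meta-analysis software may pool each subgroup with a random-effects model instead, so this is an illustration of the idea, not a reproduction of the figure above. The function names and inputs (per-study log risk ratios with their standard errors) are illustrative assumptions.

```python
import math


def pooled_fixed_effect(log_rr, se):
    """Inverse-variance fixed-effect pooled estimate and its standard error."""
    weights = [1.0 / s ** 2 for s in se]
    total = sum(weights)
    estimate = sum(w * y for w, y in zip(weights, log_rr)) / total
    return estimate, math.sqrt(1.0 / total)


def subgroup_difference_test(groups):
    """Q-test for a difference between two subgroup estimates.

    groups: list of (log_rr_list, se_list), one entry per subgroup.
    Returns (Q, df, p). For df = 1 the chi-square tail probability
    equals erfc(sqrt(Q / 2)), so this sketch only supports two subgroups.
    """
    pooled = [pooled_fixed_effect(y, s) for y, s in groups]
    weights = [1.0 / se ** 2 for _, se in pooled]
    overall = sum(w * est for w, (est, _) in zip(weights, pooled)) / sum(weights)
    # Weighted squared deviations of each subgroup estimate from the overall estimate.
    q = sum(w * (est - overall) ** 2 for w, (est, _) in zip(weights, pooled))
    df = len(groups) - 1
    if df != 1:
        raise ValueError("this sketch only handles two subgroups (df = 1)")
    p = math.erfc(math.sqrt(q / 2.0))
    return q, df, p
```

With only two subgroups, Q reduces to the squared difference between the two pooled log risk ratios divided by the sum of their variances, which makes a convenient sanity check.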

Updated Version: Published in JAMA

About two weeks later, the updated version appeared:

The new version makes important changes:

It adds two new studies (Fonseca, Okumus)

It collapses the three prevalence categories (Low/Medium/High) into two (Low/High)

It uses a different data source for Strongyloides prevalence, but only for Brazilian studies (TOGETHER, Fonseca)

It adds a sensitivity analysis and meta-regression
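Collapsing the categories and switching the prevalence source for some studies both act on the same step: assigning each study to a subgroup. A minimal sketch, assuming a single percentage cutoff, shows how a study can change subgroup purely because its prevalence estimate came from a different source. The cutoff value, study names, and prevalence figures used with it are hypothetical, not the ones in the paper.

```python
def classify(prevalence_pct, cutoff_pct):
    """Label a study region 'High' or 'Low' prevalence relative to a cutoff."""
    return "High" if prevalence_pct >= cutoff_pct else "Low"


def changed_assignments(source_a, source_b, cutoff_pct):
    """Return the studies whose Low/High label differs between two prevalence sources.

    source_a, source_b: dicts mapping study name -> prevalence (%) for the same studies.
    """
    return sorted(
        name
        for name in source_a
        if classify(source_a[name], cutoff_pct) != classify(source_b[name], cutoff_pct)
    )
```

For example, with a hypothetical 10% cutoff, a study estimated at 5% by one source and 12% by another moves from the Low to the High subgroup without any change in its outcome data.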

As a result of these changes, the case does appear to strengthen:

The effect in high-prevalence regions appears much stronger

The difference between subgroups is now statistically significant at p=0.03

Substantively, this is very close to the version that was eventually published in JAMA.

Adjusting Prevalence Estimates

As discussed in depth in my long-form article, the second version contains a serious flaw. While the paper says it uses only prevalence estimates from sources relying on parasitological methods, one of its two sources in fact uses an adjusted blend of parasitological and serological methods. Therefore, the estimates from the two sources cannot properly be used together without adjustment.

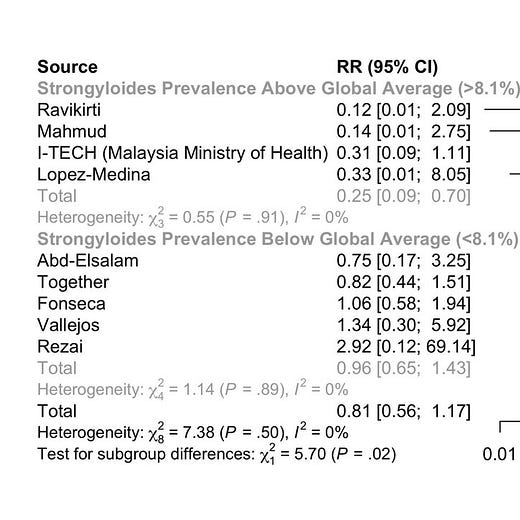

When I did a fairly simple adjustment to line up the two data sources, the correlation in the dichotomous analysis weakened to the point of being even weaker than in the original version (p=0.35), or, as a frequentist would say, “there now is no difference”:

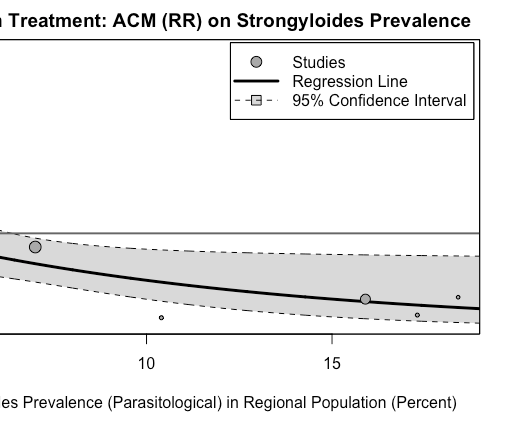

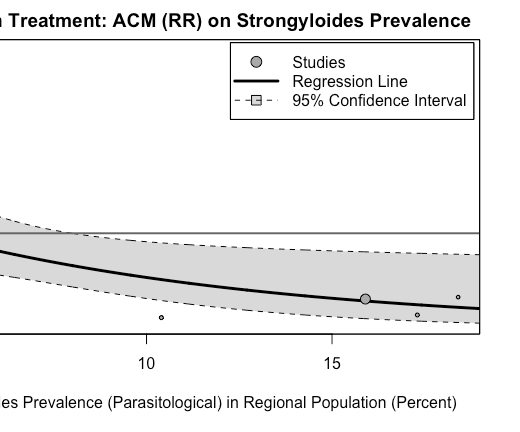

In the meta-regression, similarly—while visually there seems to be some hint of a correlation—by any formal standard, it’s not something that would merit additional attention:

As Dr. Daniel Victor Tausk, who ran the meta-regression, wrote (emphasis mine):

In the JAMA paper they estimated a = −0.0983 with p-value = 0.046 for the test of a=0. I was able to reproduce these numbers exactly (as well as the entire forest plot in the JAMA paper and the recalculated forest plot from your article).

If I rerun the metaregression replacing the JAMA prevalence with your adjusted numbers I get:

a = -0.0517, with p-value = 0.3413 for the test of a=0.

So, the original effect size was reduced and it went from barely significant to not significant at all.
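The meta-regression Dr. Tausk describes fits a line through the per-study effects, with the coefficient a measuring how the log risk ratio changes with Strongyloides prevalence; “the test of a=0” asks whether that slope is distinguishable from zero. The sketch below is a simplified fixed-effect, weighted-least-squares version written for illustration, assuming per-study log risk ratios y, their variances v, and prevalence x. It ignores between-study heterogeneity, which a random-effects meta-regression would estimate, so it should not be expected to reproduce a = −0.0983 or the p-values quoted above.

```python
import math


def fixed_effect_metaregression(y, v, x):
    """Weighted least-squares meta-regression y_i = b + a * x_i with weights 1 / v_i.

    y: per-study log risk ratios; v: their variances; x: moderator (e.g. prevalence).
    Returns (a, b, se_a, p_a), where p_a is a two-sided Wald test of a = 0.
    Variances are treated as known, so no residual scale factor is estimated.
    """
    w = [1.0 / vi for vi in v]
    sw = sum(w)
    x_bar = sum(wi * xi for wi, xi in zip(w, x)) / sw
    y_bar = sum(wi * yi for wi, yi in zip(w, y)) / sw
    sxx = sum(wi * (xi - x_bar) ** 2 for wi, xi in zip(w, x))
    sxy = sum(wi * (xi - x_bar) * (yi - y_bar) for wi, xi, yi in zip(w, x, y))
    a = sxy / sxx                      # slope: change in log RR per unit of x
    b = y_bar - a * x_bar              # intercept
    se_a = math.sqrt(1.0 / sxx)        # standard error of the slope
    z = a / se_a
    p = math.erfc(abs(z) / math.sqrt(2.0))  # two-sided normal p-value
    return a, b, se_a, p
```

A random-effects version adds an estimated between-study variance to each v_i before weighting, which generally widens the standard error of a and raises its p-value.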

Digging In

In addition, I dug deeply into each of the underlying high-prevalence studies and didn’t really find a smoking gun in terms of a signal for deaths due to hyperinfection:

So the result, after the adjustment, is that the dichotomous analysis boils down to a correlation at p=0.35, with the meta-regression at p=0.37. I’ve found no particularly strong signal in any of the high-prevalence studies. Perhaps future evidence could convince me that one, two, or even three of the 18 deaths were due to hyperinfection. But as far as I am concerned, after two iterations, the hypothesis is back to being one of many possible hypotheses, without a substantial evidentiary foundation that would merit particular attention.

What’s Next? Focus On the Pattern

While I can’t rule this hypothesis out (after all, proving a negative is not really possible in open-ended domains), I am also losing my motivation to continue exploring it for its own sake. While there are specific data that could technically come in and change my mind, I would be very surprised if they did. Also, continuing to look deeper and deeper, hunting for a signal, is not really proper epistemics either. At some point, it becomes an exercise in privileging the hypothesis. Out of hundreds of similarly plausible hypotheses, why are we digging into this one? Maybe if we use more recent studies, or a different criterion for the meta-regression, we’ll find a significant effect. Perhaps the picture would change if we also considered observational studies. Anything is possible, and p=0.05 is not a high bar when you can take several bites at the apple.
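The “several bites at the apple” point can be made concrete with a little arithmetic. If there is truly no effect and we run k independent analyses, each at the 0.05 level, the chance that at least one comes out “significant” is 1 - (1 - 0.05)^k. A minimal sketch, assuming the analyses are independent:

```python
def familywise_false_positive_rate(alpha, k):
    """Chance that at least one of k independent tests of a true null
    hypothesis comes out 'significant' at level alpha."""
    return 1.0 - (1.0 - alpha) ** k


for k in (1, 3, 5, 10):
    print(k, round(familywise_false_positive_rate(0.05, k), 3))
```

With five analyses the chance is about 23%, and with ten about 40%. Reanalyses of the same studies are correlated, so the real inflation is usually smaller than this independent-test figure, but it never drops below the 5% of the single original test.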

However, this pattern of reasoning is something that might come back to slow down the adoption of any off-patent repurposed drug. Perhaps fluvoxamine is useful because it helps with depression, which makes COVID-19 worse! Or perhaps metformin is useful because it helps with diabetes, which makes COVID-19 worse!

Any repurposed generic will always be vulnerable to the criticism that an effect shown against a new condition is not because of direct action against the condition, but because its already-known mechanism of action is helping some subset of patients do better against a new challenge.

Asymmetrically, this criticism can’t be levied against a new proprietary drug, since it doesn’t have any previously known area of application. The “But it’s horse dewormer!” meme can’t really grab people’s attention the same way.

What interests me is developing a uniform methodology that can be used to test all three of these hypotheses with minimal room for parametric manipulation. In fact, perhaps it can be tried on all repurposed drugs in the c19early catalog. Of the 47 treatments, more than half are likely repurposed. If one of the correlations is substantially stronger than the others, then maybe, indeed, there’s something there to be investigated.

But regardless of results, we need to establish a baseline under which such correlations should not be elevated to public consciousness. As we know now, they are extremely likely to be turned into memes, regardless of the strength of the underlying case.

From afar, it seems odd how quickly the gatekeepers of acceptable narratives went from being adamant that there was no effect to adjusting to this fallback position, all while not moving quickly at all to do the work needed for a definitive answer. For a drug with such a well-known safety profile, it makes sense to have it on hand in case you know you’ve been exposed, test positive, etc.

This is interesting. I do know that parasites are far more prevalent than developed countries give them credit for, but that’s not the interesting part. Back in 2020, a woman in a Facebook group who did some alternative-medicine analysis said that Strongyloides was implicated in a lot of the COVID lung pathology. If I recall correctly, she said boron would help. I doubt I could find the old post, but it certainly stuck in my mind. I had never heard of Strongyloides before then.