Back in November, a bombshell dropped as the original MATH+ paper by Drs. Pierre Kory, G. Umberto Meduri, Joseph Varon, Jose Iglesias, and Paul E. Marik was retracted by the Journal of Intensive Care Medicine (JICM).

An emerging theme of this Substack is the ways in which the social epistemology around the pandemic response collapsed. This case presents a particularly blatant example of a retraction for no apparent reason, with rationales that nobody I’ve spoken to can make heads or tails of.

Here’s how the retraction was covered by the opponents of FLCCC:

Sounds pretty bad. But is it true?

First things first, here’s the retracted paper:

In this article, we’ll go through the retraction notice, as published by the journal, claim by claim. The retraction gives us a pretty neutral summary of the section of the paper under dispute:

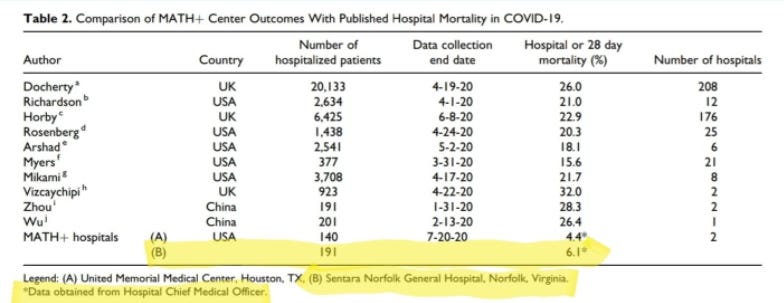

‘The data from Sentara Norfolk General Hospital were presented in Table 2, which lists in-hospital or 28-day mortality rates at the 2 MATH+ centers as compared to 10 published single-center and multicenter reports. The mortality rate among 191 patients at Sentara Norfolk General Hospital as of July 20, 2020 was reported as 6.1%, as compared to mortality rates reported in the literature ranging from 15.6% to 32%. The authors state that these data “provide supportive clinical evidence for the physiologic rationale and efficacy of the MATH+ treatment protocol.”’

The main thrust of the accusation is that “the data […] are inaccurate:”

‘The data from Sentara Norfolk General Hospital that [are] reported in this paper are inaccurate. The paper briefly states the methods as: “Available hospital outcome data for COVID-19 patients treated at these 2 hospitals as of July 20, 2020 are provided in Table 2 including comparison to the published hospital mortality rates from multiple COVID-19 publications across the United States and the world.”’

The heart of the dispute—between the authors and Sentara Norfolk General Hospital—is this table in the paper, comparing "MATH+ Center Outcomes" from two hospitals against "Published Hospital Mortality in COVID-19" from 10 studies.

Sentara Norfolk General Hospital (SNGH), represented as (B) in the last row of the table, claims that mortality should be 10.5% rather than 6.1% once the ultimate outcomes of the 191 patients, after the data collection period was over, are taken into account. That implies eight more deaths after the end of the study, on top of the 12(?) that occurred during it.
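As a rough check on those death counts, here is a minimal sketch that converts the two published percentages back into whole patients. It assumes both rates share the 191-patient denominator and that the sources rounded their percentages; the variable names are ours.

```python
# Figures quoted from the paper and the retraction notice.
patients = 191
reported_rate = 0.061   # mortality as published in Table 2
hospital_rate = 0.105   # mortality according to SNGH's review

# The percentages are rounded in the sources, so round to whole patients.
deaths_reported = round(patients * reported_rate)
deaths_hospital = round(patients * hospital_rate)
late_deaths = deaths_hospital - deaths_reported

print(deaths_reported, deaths_hospital, late_deaths)  # 12 20 8

# Neither 11 nor 12 deaths reproduces 6.1% exactly.
print(f"{11 / patients:.1%}, {12 / patients:.1%}")    # 5.8%, 6.3%
```

The question mark on the 12 is there for a reason: neither 11 nor 12 deaths out of 191 gives exactly 6.1%, so the paper's figure may have been computed on a slightly different denominator.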

What is MATH+?

To understand what happened, we need to understand what the MATH+ protocol is. MATH+ is a cutesy acronym that represents four “core” therapies: (M)ethylprednisolone, (A)scorbic acid (aka Vitamin C), (T)hiamine, (H)eparin (specifically enoxaparin), and (+), which represents a number of other co-interventions.

The paper describes the then-active version of MATH+ as follows:

This protocol was given to Covid-19 patients at two hospitals, and results from this administration are presented in the paper. These results are the heart of the dispute.

Are The Data Inaccurate?

We have to dive into the rest of the paragraphs of the retraction notice to figure out exactly what it is the hospital means by “inaccurate.” The gist of the accusation seems to be in the following two sections:

‘We have conducted a careful review of our data for patients with COVID-19 from March 22, 2020 to July 20, 2020, which shows that among the 191 patients referenced in Table 2 that the mortality rate was 10.5%, rather than 6.1%.’

‘Apparently […] census and mortality counts from hospital reports [were used] to calculate a mortality rate, but in so doing counted some patients in the denominator but not in the numerator because they died after July 20, 2020, the reported end date of the study. This would be an incorrect calculation of a hospital mortality rate, but might explain the incorrect number of 6.1% in Table 2. Using this incorrect mortality rate to compare with the published reports and claim a “75% absolute risk reduction,” is thus an incorrect conclusion regardless of which mortality rate is used.’

First, let’s pause for a second to consider something surprising. The hospital claims that the mortality rate in the paper (6.1%) is not correct, and instead it should be 10.5%. They also recognize that the comparable studies the authors show list mortality rates between 15.6% and 32%. In other words, even if we take every single thing the hospital tells us at face value, we’re still seeing a very large reduction in mortality, by the hospital’s own data. Given that dead ICU patients are not exactly the sort of thing one can hide from a hospital, we must ask ourselves a question:
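To put a number on "very large," here is a small sketch comparing the hospital's own 10.5% against the two ends of the literature range quoted in the retraction notice. This is a crude, unadjusted comparison across different cohorts, and the helper function is ours, not something from the paper.

```python
sngh_rate = 10.5                               # percent, SNGH's corrected figure
literature_low, literature_high = 15.6, 32.0   # percent, range cited for Table 2

def relative_reduction(rate, comparator):
    """Percent by which `rate` is lower than `comparator`."""
    return (comparator - rate) / comparator * 100

for comparator in (literature_low, literature_high):
    absolute = comparator - sngh_rate          # percentage points
    relative = relative_reduction(sngh_rate, comparator)
    print(f"vs {comparator}%: {absolute:.1f} points lower, {relative:.1f}% relative")
# vs 15.6%: 5.1 points lower, 32.7% relative
# vs 32.0%: 21.5 points lower, 67.2% relative
```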

Why is the hospital asking for a paper to be retracted that demonstrates—by its own numbers—that it had substantially lower mortality than other comparable hospitals? Isn’t that the sort of thing one brags about?

Secondly, the table isn't central to the paper. The study focuses on explaining why and how MATH+ came about, with a section on each of the medications used. The table is presented at the end, and is clearly described as showing preliminary results from two hospitals using MATH+.

But let’s leave these concerns aside and continue trying to understand exactly what the accusation is.

As discussed above, the hospital took issue with one table comparing overall mortality rates among hospitalized COVID patients at various clinics with those at two hospitals, including Sentara Norfolk General Hospital (SNGH), where the MATH+ protocol was applied.

SNGH complained that the study reported outcomes only up to July 20, 2020, whereas "full follow-up" data would reveal a mortality rate at SNGH of 10.5%, not 6.1%.

The criticism itself makes no sense: none of the other studies reported "full" mortality rates, since the urge to publish would mean that they did not have all the time in the world to wait for the last patient in each dataset to die or be discharged. Yet somehow, the hospital complained that Kory et al. didn't wait for all the patient outcomes to be known before filing their results.

Indeed, some of the patients hadn't been discharged by the study end, and since some of these patients passed away eventually, the true mortality rate is higher than what was reported. That may sound like a deceptive practice at first, but going through all the other studies listed in the comparison table, it's pretty clear this was standard operating procedure.

From what we can see, the studies broadly used two methods to estimate their cohorts' mortality rates:

Method 1: Deaths by end-of-study date divided by all enrolled patients, including those still in care. Used by e.g. Kory, Docherty, Mikami.

This method will most likely under-report the final mortality rate, since it does not include any post-study deaths, yet uses the full patient count as denominator.

Method 2: Deaths by end-of-study date divided by all discharged or dead patients enrolled in cohort, so excluding anyone still in care. Used by e.g. Arshad and Zhou.

This method will most likely end up over-reporting the mortality rate, since the researchers exclude all non-discharged patients from the denominator.
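The difference between the two methods is easiest to see on a toy example. The cohort below is hypothetical (it is not taken from any of the cited studies), and the function names are ours.

```python
def method_1(deaths, discharged, still_in_care):
    """Deaths by cutoff divided by all enrolled patients."""
    enrolled = deaths + discharged + still_in_care
    return deaths / enrolled

def method_2(deaths, discharged, still_in_care):
    """Deaths by cutoff divided by resolved cases only (died or discharged)."""
    return deaths / (deaths + discharged)

# Hypothetical cohort: 200 enrolled, 50 of them still in care at cutoff.
cohort = dict(deaths=20, discharged=130, still_in_care=50)

print(f"Method 1: {method_1(**cohort):.1%}")  # Method 1: 10.0%
print(f"Method 2: {method_2(**cohort):.1%}")  # Method 2: 13.3%
```

Same patients, same cutoff date, two different headline numbers. The more patients are still in care at the cutoff, the wider the gap between the two.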

One study (Horby) used a third method, calculating "28-day mortality." It’s hard to fully summarize this method, but it seems to involve complex statistical adjustments to arrive at the final numbers. Suffice it to say, it's an outlier.

What is clear is that no one used "full mortality," i.e. following up on patients until all outcomes have been resolved. Yet this is what SNGH is demanding of Kory, and this was apparently enough to get the paper retracted.

If we’re missing something, let us know in the comments.

A few more observations from our analysis:

The closest to "full mortality" are the Rosenberg and Wu papers, which had only 3% and 6.5% of patients still in hospital by study end. This means that the final mortality rate is likely to be very close to what those studies reported. Compare this to 33.6% for Docherty, 53.8% for Richardson, 24% for Mikami, 21.1% for Vizcaychipi and 14.9% for Myers (the only other studies which provided these numbers). I don't have the discharge numbers for UMMC, but from SNGH's letter, we can infer that 17.5% of its patients were still in care by Kory's study end. That puts the number of patients still in hospital by study end below the average of the above studies.
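For anyone who wants to check the "below the average" claim, here is a quick sketch using only the percentages quoted in the paragraph above. The 17.5% for SNGH is our inference from the hospital's letter, not a published figure.

```python
from statistics import mean, median

# Percent of patients still in hospital at study end, as quoted above.
still_in_hospital = {
    "Rosenberg": 3.0,
    "Wu": 6.5,
    "Docherty": 33.6,
    "Richardson": 53.8,
    "Mikami": 24.0,
    "Vizcaychipi": 21.1,
    "Myers": 14.9,
}
sngh = 17.5  # inferred from SNGH's letter

avg = mean(still_in_hospital.values())
med = median(still_in_hospital.values())
print(f"mean {avg:.1f}%, median {med:.1f}%, SNGH {sngh}%")
# mean 22.4%, median 21.1%, SNGH 17.5%
```

SNGH comes in below both the mean and the median of the seven studies that reported this number.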

Rosenberg seems to offer the best comparison to SNGH, thanks to its low percentage of non-discharged patients. It's also one of the few to report mortality both for all hospitalized patients (20.3%) and for just those in the ICU (31.7%). Compare that to 10.5% and 24.7-28%, respectively, for SNGH (using the “full” mortality figures).

What About the Other Claims?

The retraction notice also contains a hard-to-interpret section that doesn’t actually seem to be the reason the paper was retracted, but perhaps serves to cast additional doubt on the authors. It states the following:

‘In addition, of those 191 patients, only 73 patients (38.2%) received at least 1 of the 4 MATH+ therapies, and their mortality rate was 24.7%. Only 25 of 191 patients (13.1%) received all 4 MATH+ therapies, and their mortality rate was 28%.’

The paper clearly states on the same page as Table 2: "...at (Sentara) Norfolk General, the protocol was administered upon admission to the ICU." The other MATH+ center, United Memorial Medical Center, systematically provided MATH+ upon admission to the hospital. As such, quoting a high mortality rate for MATH+ patients makes it sound like the protocol was killing people, until you realize that the figure refers to ICU patients, for whom such a rate is comparatively low.

Here’s the statement in the paper itself:

So, the authors created a comparison table the best way they could: by comparing all cohorts from day-of-hospitalization onwards, with the best data they had on each.

The fact that SNGH is playing with the numbers is actually easy to see from the retraction notice alone: they make it look like MATH+ actually made things worse, but if we subtract the MATH+ patients from the set of all patients reported, we see that among the remaining 118 patients in the cohort, there were only two deaths.

In other words, if we take SNGH’s words seriously, we'd have to believe not that taking MATH+ makes things worse, but that—only at SNGH—NOT taking it makes you practically invincible to COVID, even though you were hospitalized for it. The alternative interpretation, as the paper clearly states, but the retraction notice omits, is that MATH+ was administered in the ICU, and maybe certain isolated therapies were tried pre-ICU on some of the more seriously ill patients, which would explain why the non-MATH+ patients had pretty great outcomes. They were the people that didn’t end up in the ICU.
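The "two deaths among 118 patients" figure can be reproduced from the retraction notice's own percentages. This sketch assumes the notice's rates are rounded and that both apply to the same 191-patient cohort; the variable names are ours.

```python
total_patients = 191
total_deaths = round(total_patients * 0.105)   # 10.5% overall, per SNGH

math_patients = 73                             # received at least 1 of 4 therapies
math_deaths = round(math_patients * 0.247)     # 24.7% mortality in that group

other_patients = total_patients - math_patients
other_deaths = total_deaths - math_deaths
other_rate = other_deaths / other_patients

print(total_deaths, math_deaths)               # 20 18
print(other_patients, other_deaths)            # 118 2
print(f"{other_rate:.1%}")                     # 1.7%
```

A 1.7% mortality rate among patients hospitalized for COVID-19 is hard to explain unless those 118 were simply the patients who never became sick enough for the ICU.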

If SNGH seriously wanted to allege that MATH+ is associated with 24-28% mortality, and that this should tell us something about the protocol’s effectiveness, it would have to compare against ICU numbers. For instance, in the Docherty study, high-dependency or ICU patients had 31.9% mortality at 28 days, with 40.6% still hospitalized at the end of the reporting period. In the Recovery/Horby trial, invasive ventilation was associated with 36.9% mortality at 28 days.

In the Richardson study, ICU had only 22.7% mortality within the 35-day reporting period, which sounds good until you realize that 70.9% of ICU patients were still in the hospital at the end of the reporting period. Only 6.4% had been discharged. We can be practically certain that many of those patients died and were not ultimately discharged.
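To see how little the 22.7% figure pins down, here is a sketch of the range the final ICU mortality could fall in, using only the three Richardson percentages quoted above. The "resolved cases only" number is a Method 2 style estimate, not a prediction of the final outcome.

```python
# Richardson ICU patients at the end of the reporting period (percent).
died, still_in_hospital, discharged = 22.7, 70.9, 6.4

floor = died                                  # if everyone still in hospital survived
ceiling = died + still_in_hospital            # if no one still in hospital survived
resolved_only = died / (died + discharged) * 100

print(f"floor {floor:.1f}%, ceiling {ceiling:.1f}%")   # floor 22.7%, ceiling 93.6%
print(f"resolved cases only: {resolved_only:.1f}%")    # resolved cases only: 78.0%
```

Among the ICU patients whose outcome was already known, roughly four out of five had died. The 22.7% is a floor, not an estimate.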

Was There an Ulterior Motive?

SNGH alleged, and the Journal of Intensive Care Medicine agreed, that the FLCCC authors should have used a method of calculation that would be impossible to follow without waiting much longer to publish than anyone else in the literature did. The resulting number would, by definition, not be comparable to the numbers in studies with a defined cutoff date. Even so, the results the hospital wanted the authors to use instead look pretty good compared to the literature, even if we ignore the definitional difference.

In order to make their case, they insinuated that MATH+ patients had worse outcomes than other patients, not revealing that the MATH+ patients were ICU patients. If, indeed, we compare like-for-like with other patients admitted to ICU or similar, those results also look very positive.

It's difficult to avoid the conclusion that the hospital tried hard to get the paper retracted. Why? Well, it may not be entirely irrelevant that the hospital was in the middle of a legal battle with the Director of its ICU, Dr. Paul Marik, one of the authors of the paper, over its decision to prohibit him from using the protocol:

Is that proof of malfeasance? No, but it certainly is highly indicative that something nefarious was going on. At the very least, I would have felt differently about this had the conflict between the hospital and Marik been disclosed in the retraction request to the journal.

The FLCCC has published the email banning Paul Marik from using his preferred treatments:

Why would the hospital interfere in such a way with the practice of medicine? For instance, fluvoxamine, also on that list, is currently recommended by Johns Hopkins Medical Institutes for the treatment of COVID-19. Why was SNGH preventing its doctors from exercising their clinical judgment this way?

Well, it appears that hospitals get an add-on payment for using the "official" treatments (remdesivir, convalescent plasma). Something like fluvoxamine or ivermectin may imply a big financial loss if it displaces those incentivized treatments, and the hospital would have a hard time winning that argument if its own published data show favorable results by using repurposed generics.

It’s hard to parse the regulatory documents, but there seems to be a bonus in the region of $20,000 per patient (65% of $30,000, or $19,500), so it's not small potatoes. Please let me know if there’s additional nuance here, or if the rules have changed since.

In other news, this whole mess is now the penultimate paragraph in Dr. Paul E. Marik's Wikipedia page. This is how easy it is for a bureaucratic organization to smear the reputation of a doctor with a vast publication history.

In November 2021, the Journal of Intensive Care Medicine retracted a paper written by Marik and others associated with the FLCCC, including Pierre Kory. The paper promoted a combination of vitamins and drugs as treatment for patients hospitalized for COVID-19. The combination was called MATH+ by the FLCCC and included methylprednisolone, ascorbic acid, thiamine, heparin, and other ingredients. The retraction was triggered when it was found the paper misreported the mortality figures of hospitalized patients treated with MATH+, falsely making it appear to be an effective treatment.[37][38][39]

Paul Marik was suspended following his lawsuit against the hospital, and he eventually ended up leaving his position. The legal battle with the hospital is ongoing.

On a positive note, it appears that the bulk of the paper has been published by the Journal Of Clinical Medicine Research, without the section that includes the offending data, presumably to avoid further adventures in numerical definitions. But having done the analysis, we now know that the numbers, as validated by the hospital itself, were actually superior to all the comparable papers cited.

A parting note: this has been a dive into the data from multiple papers, so there may have been something we missed. Please let us know about anything we may have gotten wrong by leaving a comment below and, if we are convinced, we will update the article accordingly.

Appendix - Minor Corrections To The Paper

In the spirit of full transparency and intellectual honesty, we did find some numbers in the table that could have warranted minor corrections or clarifications. For one, the table sometimes uses rates from method 1 and sometimes from method 2. Some may claim the authors are misleading here, since method 1 will likely under-report mortality and method 2 will likely over-report it. So let’s see if anything big changes when we try to harmonize the numbers.

In two cases (Arshad and Zhou), that couldn't be helped since these only reported rates using the second method. In two others, Richardson and Myers, both figures are available, but Kory's table uses the figure that potentially over-reports mortality.

Swapping the numbers doesn't make a huge difference for Myers, but for Richardson, mortality plummets to 9.7% from 21%! That looks huge, until you see it's based on more than half of the patients (53.8%) still being in care by study end. It looks like neither rate is very useful.
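The two Richardson figures are actually the same death count divided by two different denominators, which a quick calculation makes visible. This sketch assumes the 21% is deaths over resolved cases (method 2) and the 9.7% is deaths over all enrolled patients (method 1), as described above.

```python
resolved_only_rate = 0.21   # method 2: deaths / (died + discharged)
still_in_care = 0.538       # share of enrolled patients unresolved at study end

# Deaths as a share of everyone enrolled: scale by the resolved fraction.
all_enrolled_rate = resolved_only_rate * (1 - still_in_care)

print(f"{all_enrolled_rate:.1%}")  # 9.7%
```

So the drop from 21% to 9.7% says nothing new about patient outcomes. It only reflects how many patients were left out of, or added to, the denominator.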

In another instance, for Vizcaychipi, it looks like the table uses the mortality figure from ICU-only instead of all-hospitalized. If we’re right about this, the correct number here should be 26.3%, not 32.4%.

Finally, we couldn't locate where the authors got the 26.4% and 22.9% rates for Wu and Horby. I'm seeing 21.9% and 23.6%, respectively. Again, this doesn't move the needle much.

To sum up, here's the updated version of the table vs. the original. Even with these corrections (which SNGH didn't suggest, to be clear!), the takeaway is not very different. May warrant edits or corrections, but a retraction?

If you want to read what Dr. Pierre Kory, the lead author, had to say about the retraction, the thread below is the place to look:

The lack of integrity in our scientific and medical authorities is a tragedy. So many journals have behaved disgracefully during this pandemic. Thank you for this review and going to bat for these doctors who care about people.

At this point, any attempts at rational discussion with the gatekeepers of authorized approved blessed by God and possessors of the Waters of Life, etc. etc. etc., reflects a touching and naive belief that one can wring justice from, say, the gates of hell when the imps guarding them don't give a half shit.

I'd say start gathering class-action battle-hardened law firms willing to stand up for the rights of man, or whatever, because the horrors looming with the approval of the vax for our tiniest children need everyone of decency to man the ramparts for an extended and quite literally, unfortunately, to the death struggle.

I understand that Dr. Kory's medical license is now under attack. Parents of unvaccinated children will soon find it impossible to enroll them in daycares, preschools etc. and will be desperate when thereby shut out from, basically, all babysitting options.

This is now a disaster of unfathomable depths. What weapons do we have to survive it?